Advanced sensors are powering the age of autonomous vehicles, but current solutions have their limits. Existing camera-based solutions are constrained in detection range, depth accuracy, and low-light performance. LiDAR solutions are restricted by low angular resolution, limited object classification, large dimensions, and high cost. Radar solutions are limited in resolution and field of view (FoV), and offer only limited object classification.

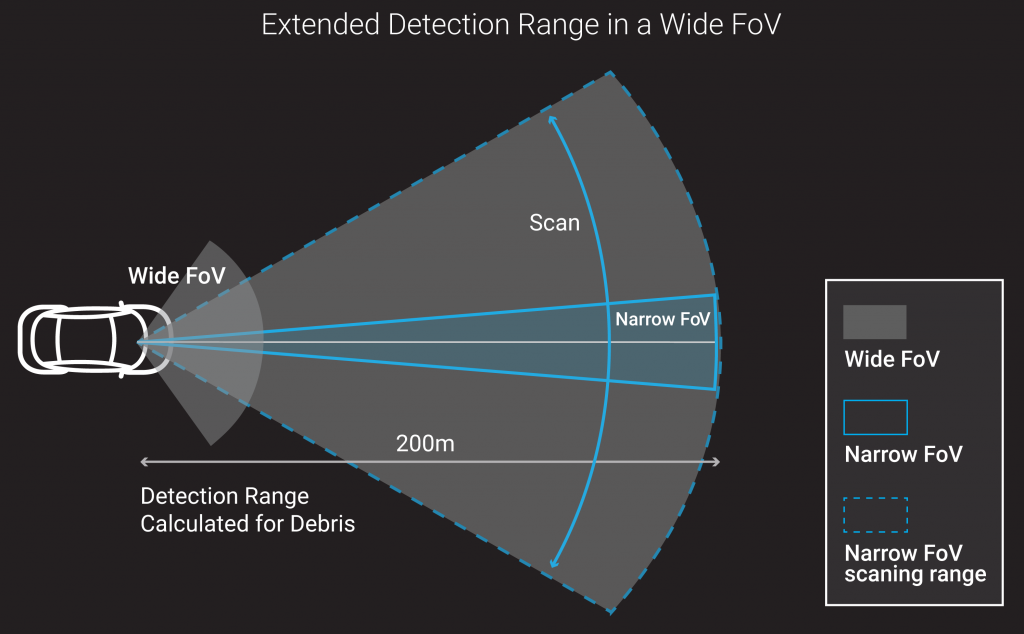

These limitations hinder an autonomous vehicle’s ability to negotiate complex driving situations on its own, such as seeing farther around curves or detecting small objects at long distances. Widening a sensor’s FoV spreads the same number of pixels over a larger angle, which shortens its effective range; additional sensors and processing power are then needed to compensate, raising the overall cost. Next-generation autonomous vehicles and advanced driver-assistance systems (ADAS) require a sensor suite that delivers strong object classification and high angular resolution without compromising FoV.
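
The FoV/range trade-off can be made concrete with a simple pinhole-camera estimate. The sketch below is illustrative only: it assumes uniform angular sampling across the image, and the sensor width (3840 pixels), object width (0.5 m), and 20-pixel minimum needed for classification are hypothetical values, not Roadrunner specifications.

```python
import math


def detection_range_m(object_width_m: float, hfov_deg: float,
                      h_pixels: int, min_pixels: int) -> float:
    """Distance at which an object spans `min_pixels` horizontally.

    Simplifying assumption: every pixel covers hfov_deg / h_pixels degrees
    (uniform angular sampling), which only approximates a real lens.
    """
    deg_per_pixel = hfov_deg / h_pixels
    required_angle_rad = math.radians(min_pixels * deg_per_pixel)
    # An object of width W at distance R subtends 2 * atan(W / (2R)); solve for R.
    return object_width_m / (2 * math.tan(required_angle_rad / 2))


if __name__ == "__main__":
    # Hypothetical sensor: 3840 horizontal pixels, 0.5 m object, 20 px needed.
    for hfov in (30, 60, 120):
        r = detection_range_m(0.5, hfov, 3840, 20)
        print(f"HFoV {hfov:>3} deg -> about {r:.0f} m")
```

With the sensor held fixed, doubling the FoV roughly halves the range at which the object still covers enough pixels, which is why covering a wide scene at long range with one fixed camera calls for more pixels or more cameras.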

Roadrunner by Corephotonics is a next-generation sensing solution that combines a stationary wide-FoV camera with a narrow-FoV scanning camera to deliver both a wide FoV and a long detection range of up to 300 meters. Roadrunner significantly extends the detection and classification distance of visual data, offers the highest angular resolution across a wider FoV, lowers cost, has a small physical footprint and, importantly, gives passengers a sense of safety.
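
Why pair a stationary wide camera with a steerable narrow one? The sketch below compares the angular sampling density of two hypothetical cameras sharing a 3840-pixel-wide sensor (120° and 20° horizontal FoV; neither figure comes from Corephotonics) and counts how many narrow-FoV positions would be needed to tile the whole wide FoV with 10% overlap. The `Camera` type and `tiles_to_cover` helper are illustrative names, not part of any Roadrunner API.

```python
import math
from dataclasses import dataclass


@dataclass
class Camera:
    name: str
    hfov_deg: float  # horizontal field of view
    h_pixels: int    # horizontal resolution

    @property
    def pixels_per_degree(self) -> float:
        # Average angular sampling density across the FoV.
        return self.h_pixels / self.hfov_deg


def tiles_to_cover(wide: Camera, narrow: Camera, overlap: float = 0.1) -> int:
    """Narrow-FoV pointing positions needed to tile the wide FoV horizontally."""
    if narrow.hfov_deg >= wide.hfov_deg:
        return 1
    step_deg = narrow.hfov_deg * (1 - overlap)  # advance per tile, keeping some overlap
    return 1 + math.ceil((wide.hfov_deg - narrow.hfov_deg) / step_deg)


if __name__ == "__main__":
    # Hypothetical cameras with the same sensor width but different lenses.
    wide = Camera("wide", hfov_deg=120.0, h_pixels=3840)
    narrow = Camera("narrow", hfov_deg=20.0, h_pixels=3840)
    print(wide.pixels_per_degree, narrow.pixels_per_degree)  # 32.0 192.0
    print(tiles_to_cover(wide, narrow))  # 7
```

Under these assumptions the narrow camera samples the scene six times more densely, but sweeping it across the entire wide FoV would take seven positions. That cost suggests why a scanning design aims the narrow camera only at selected regions rather than covering everything.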

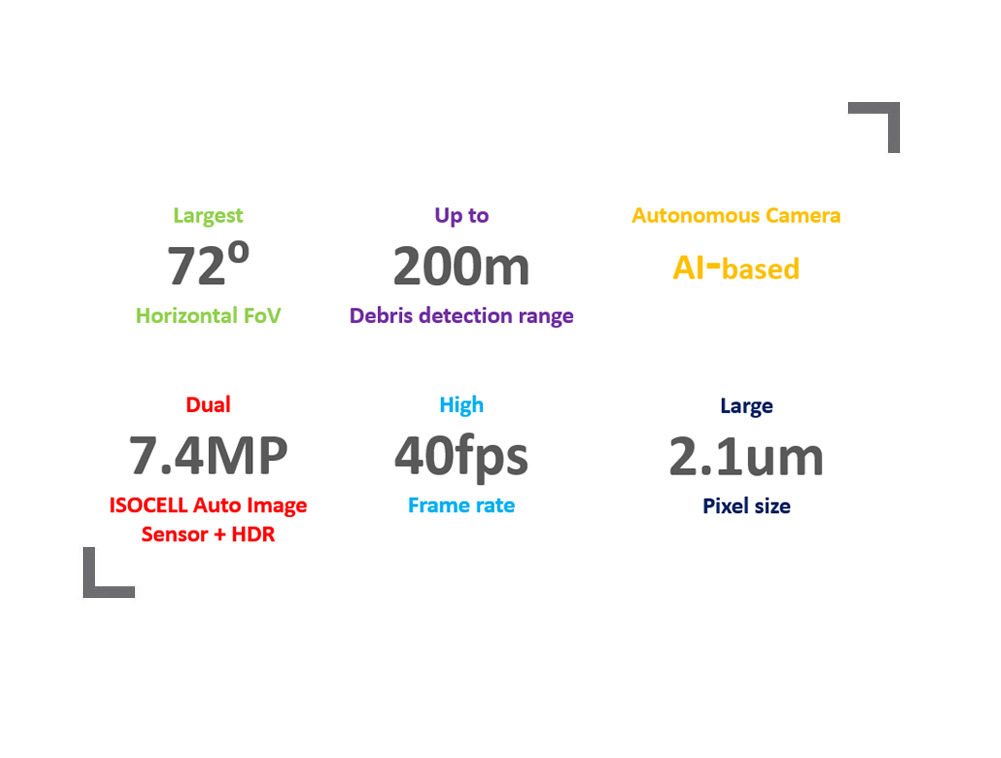

Built on Corephotonics’ extensive experience with multi-aperture camera systems and complementary algorithms, Roadrunner uses deep learning algorithms to determine, at any given moment, the region of interest (ROI) at which the scanning camera is aimed. This addresses a common industry pain point: with Roadrunner, autonomous vehicles can classify pedestrians, vehicles, traffic lights, and signs, even on a curvy road, and better detect small debris as far as 200 m away.
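
The text does not say how Roadrunner’s deep learning models choose the ROI. As a rough illustration of the control loop only, the sketch below substitutes a simple hand-written priority rule: it takes detections from the wide camera (assumed to come from some upstream detector), favors small, low-confidence objects, and converts the chosen image column into an azimuth for the scanning camera using an ideal pinhole model. All names, the priority rule, and the ±50° scan limit are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Detection:
    label: str
    confidence: float   # detector score in [0, 1]
    x_center_px: float  # horizontal box center in the wide image
    width_px: float     # horizontal box width in the wide image


def pixel_to_azimuth_deg(x_px: float, image_width_px: int, hfov_deg: float) -> float:
    """Azimuth of an image column for an ideal pinhole (rectilinear) camera."""
    focal_px = (image_width_px / 2) / math.tan(math.radians(hfov_deg) / 2)
    return math.degrees(math.atan((x_px - image_width_px / 2) / focal_px))


def roi_priority(det: Detection) -> float:
    # Hypothetical heuristic: small (likely distant) and uncertain detections
    # gain the most from a higher-resolution look.
    return (1.0 - det.confidence) / max(det.width_px, 1.0)


def select_scan_azimuth(detections: Sequence[Detection], image_width_px: int,
                        hfov_deg: float, scan_limit_deg: float) -> Optional[float]:
    """Pick a pointing angle for the narrow camera, or None if nothing is detected."""
    if not detections:
        return None
    target = max(detections, key=roi_priority)
    azimuth = pixel_to_azimuth_deg(target.x_center_px, image_width_px, hfov_deg)
    # Keep the command inside the (assumed) mechanical scan range.
    return max(-scan_limit_deg, min(scan_limit_deg, azimuth))


if __name__ == "__main__":
    dets = [
        Detection("car", 0.95, x_center_px=1920, width_px=300),
        Detection("sign", 0.40, x_center_px=3000, width_px=12),
    ]
    print(select_scan_azimuth(dets, image_width_px=3840, hfov_deg=120.0, scan_limit_deg=50.0))
```

Here the small, uncertain sign outranks the large, confidently detected car, so the narrow camera would be pointed about 44° to the right. A learned model would replace `roi_priority`; the pixel-to-azimuth step stays the same regardless of how the target is chosen.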

Roadrunner’s robust design is geared to meet all automotive-grade standards. The camera features an open architecture that can be integrated and fused with other sensors, such as LiDAR or radar, as well as with other mapping and localization solutions. This makes the solution highly customizable and suitable for multiple levels of autonomy, from L2 upward.
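
The text does not describe Roadrunner’s fusion interface. As a generic illustration of camera-radar late fusion, the sketch below pairs camera detections (which carry a class label) with radar returns (which carry range) by greedy nearest-azimuth matching within a gate. All types, the 1° gate, and the matching rule are hypothetical; real systems often add object tracking and a globally optimal assignment step.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class CameraObject:
    label: str          # class from the camera's classifier
    azimuth_deg: float


@dataclass
class RadarObject:
    azimuth_deg: float
    range_m: float      # radar measures range directly


@dataclass
class FusedObject:
    label: str
    azimuth_deg: float
    range_m: Optional[float]  # None when no radar return matched


def fuse(camera_objs: Sequence[CameraObject], radar_objs: Sequence[RadarObject],
         gate_deg: float = 1.0) -> List[FusedObject]:
    """Greedy nearest-azimuth association; each radar return is used at most once."""
    unused = list(radar_objs)
    fused = []
    for cam in camera_objs:
        best = min(unused, key=lambda r: abs(r.azimuth_deg - cam.azimuth_deg), default=None)
        if best is not None and abs(best.azimuth_deg - cam.azimuth_deg) <= gate_deg:
            unused.remove(best)
            fused.append(FusedObject(cam.label, cam.azimuth_deg, best.range_m))
        else:
            fused.append(FusedObject(cam.label, cam.azimuth_deg, None))
    return fused


if __name__ == "__main__":
    cams = [CameraObject("pedestrian", -5.0), CameraObject("car", 10.2)]
    radar = [RadarObject(10.0, 85.0), RadarObject(30.0, 150.0)]
    for obj in fuse(cams, radar):
        print(obj)
```

The car inherits the 85 m radar range, while the pedestrian has no radar return within the gate and keeps `range_m=None`. Because matching is greedy, the result can depend on the order of camera detections, which is one reason production pipelines tend to use a global assignment instead.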
